For publishers and media companies struggling to cut through the digital noise, optimizing the performance of digital campaigns is one of the most essential – and most challenging! – parts of reaching audiences, maximizing engagement, and driving results.

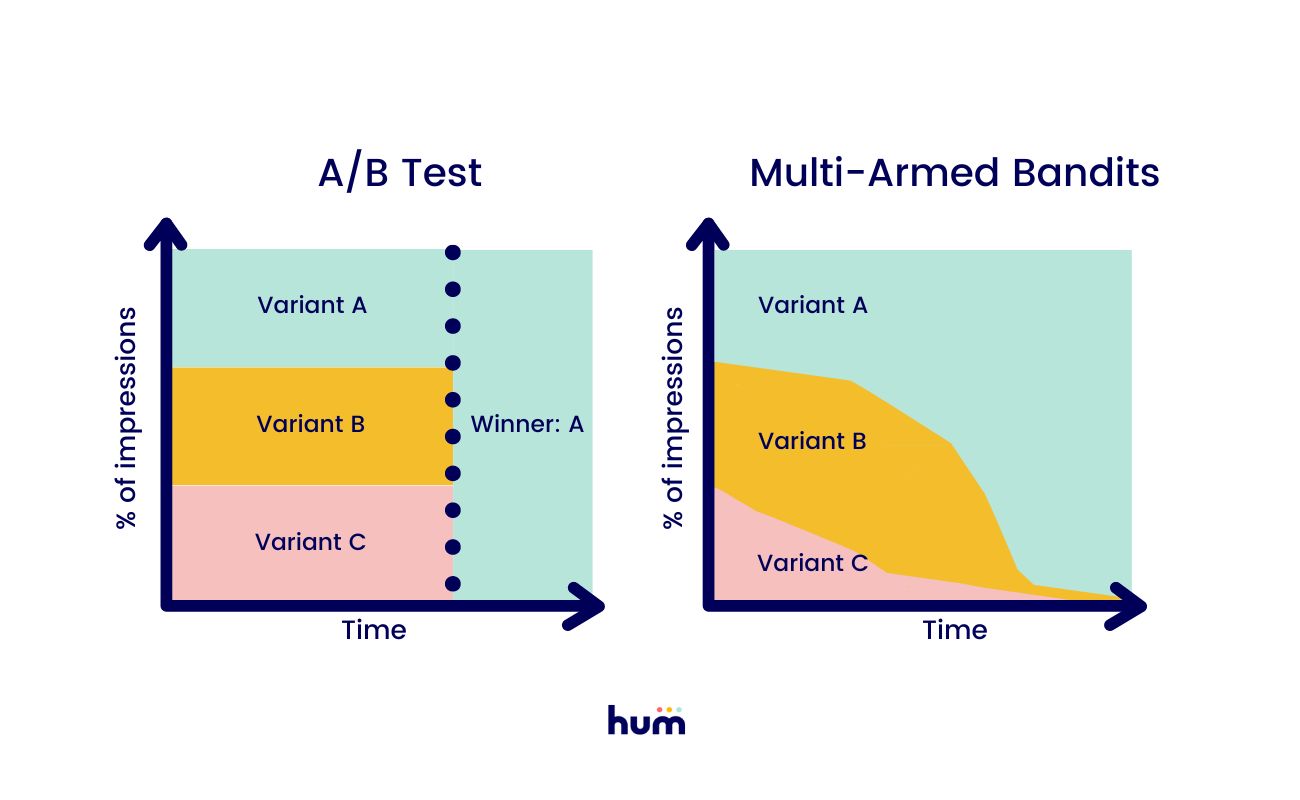

Traditional A/B testing requires large sample sizes to deliver statistically significant results, a luxury many publishers simply don’t have when they’re delivering campaigns to highly specialized audiences. And while you’re collecting data about which message resonates more with your audience, you’re also feeding traffic to less effective options until a single winner is declared.

When every impression matters, you can’t afford to waste time or opportunities sending a significant percentage of your audience a sub-optimal experience.

Enter the multi-armed bandit (MAB) approach: a smarter, more efficient way to maximize campaign performance.

What is a Multi-Armed Bandit Algorithm?

Unlike traditional A/B testing, MAB uses machine learning algorithms to dynamically shift traffic towards better-performing variants. Where A/B testing is about determining a winner, MAB is about getting you as many conversions as possible for your campaign.

Imagine you have 50 tokens to use in an arcade, and you want to win as many tickets as possible.

If you took an A/B testing approach, you’d need to play each of the three games in the arcade ten times to determine which game you are the best at. Only after you’d played each game ten times would you spend your 20 remaining tokens on the game that netted you the most tickets.

With MAB, you throw out the notion that you need to spend 30 tokens upfront to find a winner. Instead, you play at random for a bit. You might play a game or two of Zombie Snatcher, then move on to Skee-Ball. But if you notice after 3 or 4 games that Zombie Snatcher seems rigged, while you consistently win several tickets on Skee-Ball, you stop spending your effort and your tokens on Zombie Snatcher.

You might come back to it later, just to make sure you weren’t unlucky at the start. And you’d keep an eye on your wins at Skee-Ball to make sure things don’t fall off after a stroke of early luck. Overall though, in just a few games you’ve found that your tokens are better spent on Skee-Ball than on Zombie Snatcher.

You didn’t waste 30 tokens up front figuring that out. You noticed quickly and adjusted.
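To make the arcade comparison concrete, here is a minimal Python sketch of both strategies. Everything in it is an assumption for illustration: the win rates are invented, the third game ("Whack-a-Mole") is a hypothetical stand-in because the story only names two games, and the bandit uses a simple "epsilon-greedy" rule, which is one of several ways to balance exploring and exploiting. It counts winning plays rather than tickets to keep things simple.

```python
import random

# Hypothetical chance of winning on each play (invented for illustration).
# "Whack-a-Mole" is a made-up third game; the story only names two.
WIN_RATE = {"Zombie Snatcher": 0.10, "Skee-Ball": 0.60, "Whack-a-Mole": 0.30}
TOKENS = 50


def play(game, rng):
    """Spend one token; return 1 if the play wins, else 0."""
    return 1 if rng.random() < WIN_RATE[game] else 0


def ab_style(rng, trial_plays=10):
    """Play every game `trial_plays` times, then spend the rest on the leader."""
    wins = {g: 0 for g in WIN_RATE}
    plays = {g: 0 for g in WIN_RATE}
    for game in WIN_RATE:
        for _ in range(trial_plays):
            wins[game] += play(game, rng)
            plays[game] += 1
    leader = max(wins, key=wins.get)
    for _ in range(TOKENS - trial_plays * len(WIN_RATE)):
        wins[leader] += play(leader, rng)
        plays[leader] += 1
    return sum(wins.values()), plays


def bandit_style(rng, explore=0.2):
    """Epsilon-greedy: usually play the best game so far, sometimes try another."""
    wins = {g: 0 for g in WIN_RATE}
    plays = {g: 0 for g in WIN_RATE}
    games = list(WIN_RATE)
    for _ in range(TOKENS):
        untried = [g for g in games if plays[g] == 0]
        if untried:
            game = untried[0]  # try every game at least once
        elif rng.random() < explore:
            game = rng.choice(games)  # occasionally re-check any game
        else:
            game = max(games, key=lambda g: wins[g] / plays[g])
        wins[game] += play(game, rng)
        plays[game] += 1
    return sum(wins.values()), plays


if __name__ == "__main__":
    rng = random.Random(7)
    runs = 2000
    ab_avg = sum(ab_style(rng)[0] for _ in range(runs)) / runs
    mab_avg = sum(bandit_style(rng)[0] for _ in range(runs)) / runs
    print(f"A/B-style average wins:    {ab_avg:.1f}")
    print(f"Bandit-style average wins: {mab_avg:.1f}")
```

Changing the win rates or the `explore` value shows how much the gap between the two strategies depends on how different the games really are.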

Adapting to Evolving Trends

MAB dynamically shifts more impressions to the better-performing variant as users interact with your campaign in real time, meaning more of your readers will see the best variant.

How quickly does MAB identify a top-performing variant? That depends on the overall conversion rate, the difference in conversion rates across variants, and the number of variants being tested.

When you launch a campaign with multiple variants, impressions are initially served to variant A and variant B (and C, D, or E!) in an even split.

The algorithm updates the odds for each variant with every single impression and conversion, reacting as more and more users interact with the campaign. As the number of impressions increases, the impact that a single impression can have on the balance between variants decreases, and the campaign begins to push more people towards the better-converting variant.
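One common way to get this behavior is a technique called Thompson sampling. The sketch below is an assumption for illustration, not a description of how Hum implements it: the `BetaBandit` class, the `simulate` helper, and the conversion rates are all hypothetical. Each variant keeps a running tally of conversions and non-conversions. With no data, every variant is equally likely to be served (the even split), and as the tallies grow, any single impression moves the balance less.

```python
import random


class BetaBandit:
    """Thompson sampling with Beta(1, 1) priors (an assumed method, not Hum's)."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # Per variant: [conversions + 1, non-conversions + 1]
        self.params = {v: [1, 1] for v in variants}

    def choose(self):
        """Draw a plausible conversion rate per variant; serve the highest draw."""
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.params.items()}
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        """Update the served variant's tally after one impression."""
        self.params[variant][0 if converted else 1] += 1


def simulate(true_rates, impressions, seed=0):
    """Serve impressions one at a time against hypothetical true conversion rates."""
    bandit = BetaBandit(true_rates, seed=seed)
    served = {v: 0 for v in true_rates}
    for _ in range(impressions):
        variant = bandit.choose()
        served[variant] += 1
        converted = bandit.rng.random() < true_rates[variant]
        bandit.record(variant, converted)
    return served


if __name__ == "__main__":
    # Invented conversion rates for three variants.
    print(simulate({"A": 0.02, "B": 0.05, "C": 0.03}, impressions=20000))
```

Running it with rates that are closer together, or with more variants, shows why those factors affect how long it takes for one variant to pull ahead.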

Multi-Armed Bandits in Hum

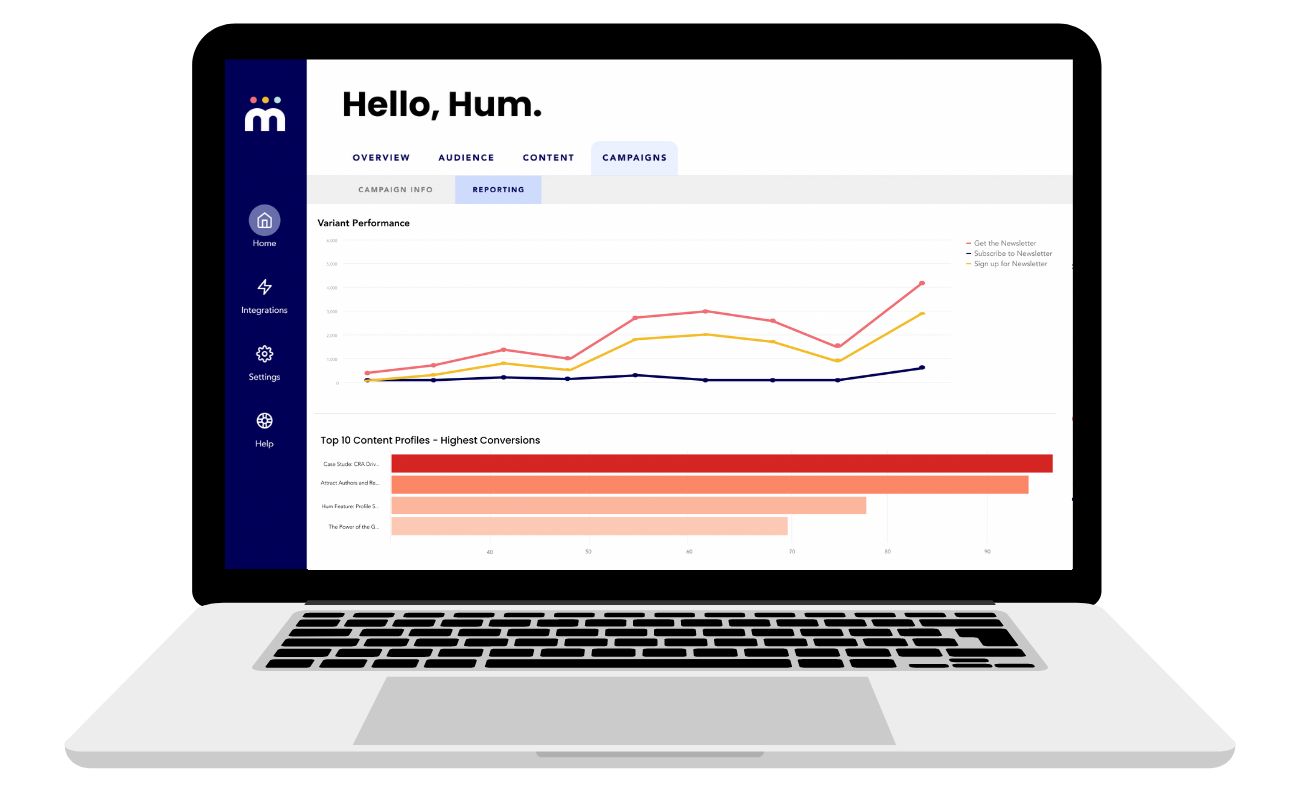

Hum now includes the ability to create up to 10 variants for Live Engagement Campaigns.

Marketers are able to test multiple elements simultaneously – including prompt positioning, headline and body copy, images, and calls to action – to find the most effective combination.

Hum uses real-time user behavior to optimize delivery of the variants within each campaign, meaning your campaigns will:

- Automatically funnel more traffic towards the more effective experience, maximizing the number of audience members who will see the top-performing variant.

- React when audience preferences shift over time and a different variant starts to perform better.

- Allow marketers to use variants on campaigns with lower traffic than might be needed for traditional A/B testing.

As with all Live Engagement Campaigns in Hum, you can set multi-armed bandit campaigns to show to specific audience segments and/or on specific pages. And Hum’s reporting shows variant performance over time, so you can analyze campaign performance in detail and develop best practices for future campaigns.

(Hum still can’t improve your odds of winning Zombie Snatcher, but we’re working on it.)